Casa del Libro on Twitter: "🎄 📚 Want a #NavidadDeLibros? These are the great books you can't go without this Three Kings' Day. Want to take them home? 📍 RETWEET 📍 FOLLOW US 📍 MENTION 2 FRIENDS ¡

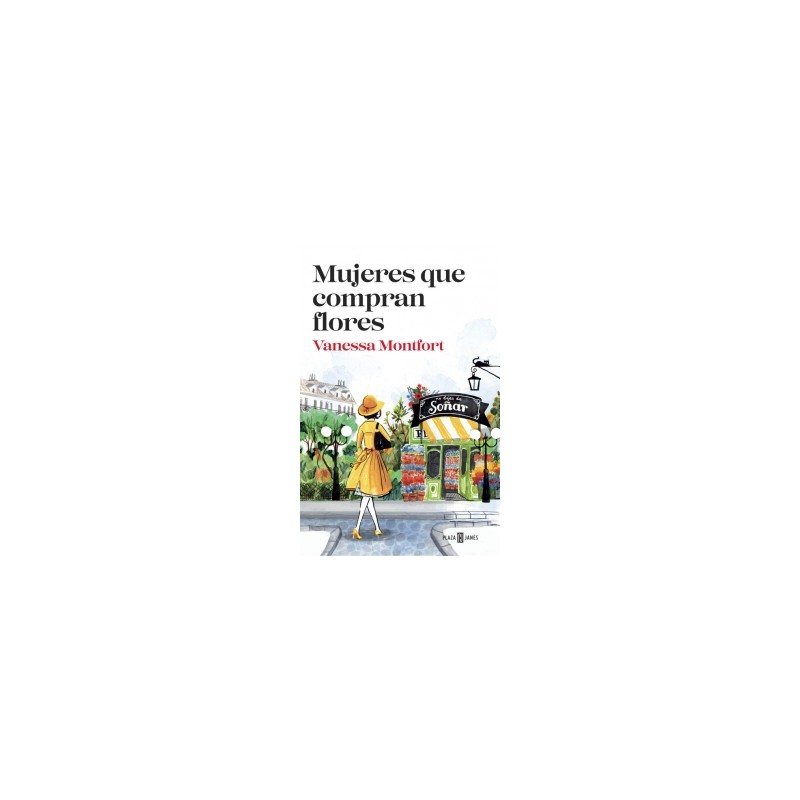

Mujeres que compran flores: a novel about friendship, fun and highly addictive (and a new giveaway!!!) | Guía de Jardín

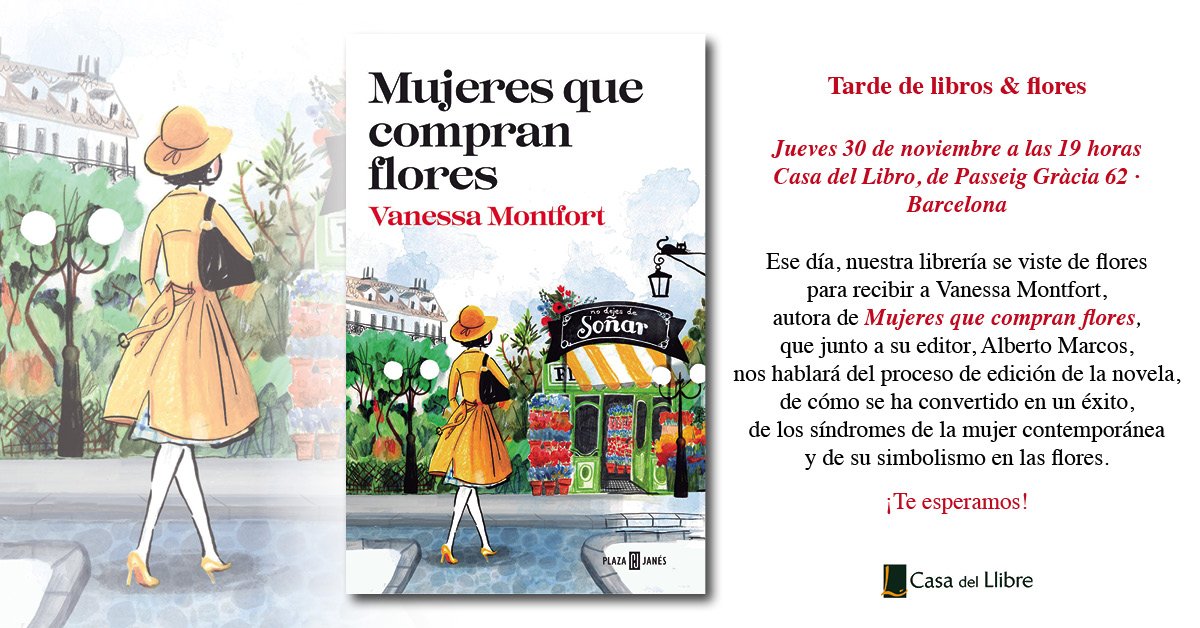

Casa del Libro on Twitter: "#SemanaDeLaMujerCDL is coming up. And we have lots and lots of surprises!!! Here is a preview of these activities. https://t.co/1lmIyD27Us" / Twitter